Why AI Demos Work and Your Business Doesn't (Yet): The Context Gap Nobody Explains

If you have tried AI and felt like it worked for everyone except you, there is a structural reason for that. It is not a personal failure. It is a product design reality.

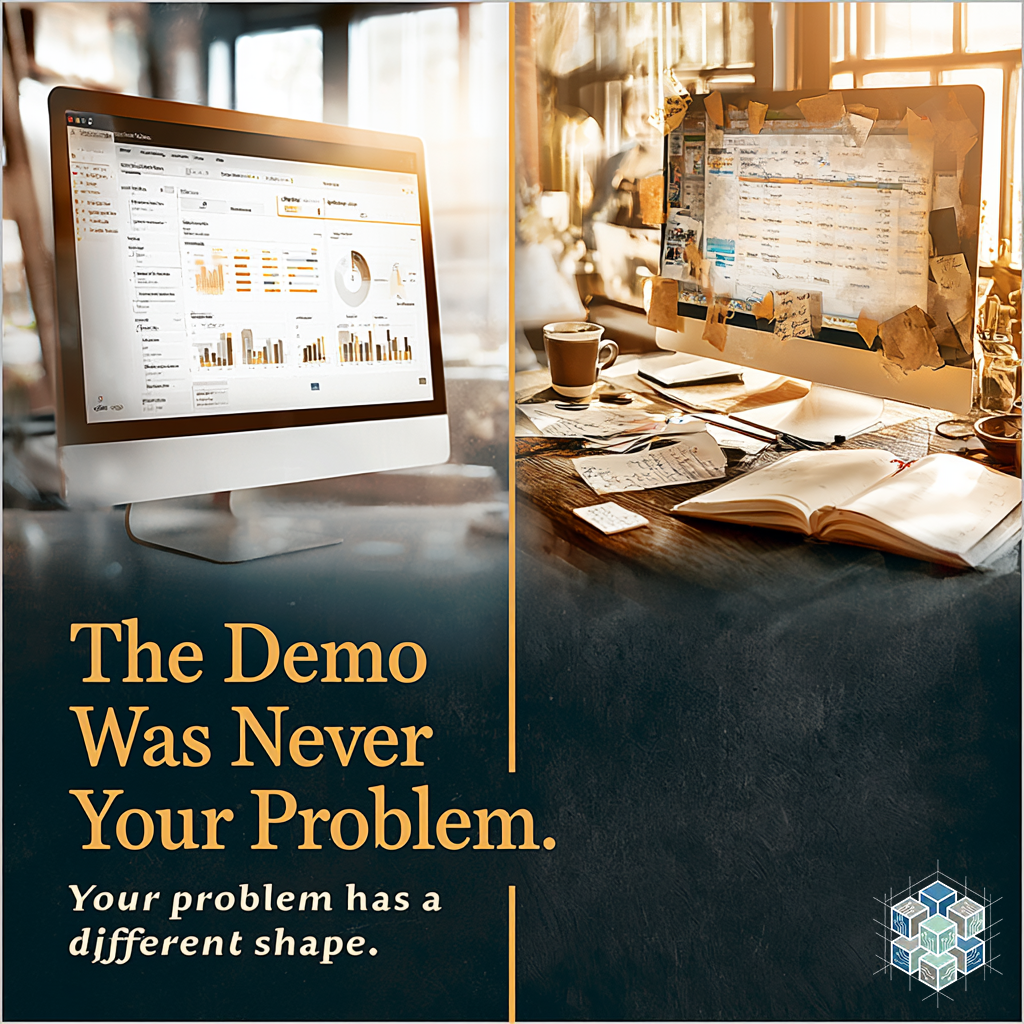

AI demos are built to impress. This is not criticism; it is simply what they are for. A demo selects a use case that shows the tool performing well under favorable conditions. The input data is clean. The scenario is linear. The output is exactly what you would want, produced in a fraction of the expected time. The impression it leaves is that this is what everyday use of the tool is like.

It is not.

Using a tool inside a real business means dealing with ambiguous inputs. It means client data that doesn't fit a clean format. It means workflows with exceptions, history, and judgment calls embedded in them. It means limited time and limited patience for troubleshooting. It means the problem you're solving is probably not as well-defined as the demo's problem was.

The gap between those two realities is not a capability gap. It is a context gap.

When small business owners walk away from an AI tool feeling like it didn't work for them, the most common explanation they reach for is personal: they set it up wrong, or they needed more training, or they're just not technical enough. All of those explanations preserve the tool's reputation while placing the failure on the user.

A more accurate explanation: the tool was applied to the wrong problem, or to a problem that wasn't specific enough to be solvable.

There is a question that cuts through this. Before you choose any AI tool, before you watch any demo, ask yourself: what is the specific thing in my business that costs me repeated effort every week? Not a vague area. A specific thing. Something that happens on a schedule, requires the same type of thinking, produces a similar output each time, and takes more time than it should.

That specificity is what makes AI useful. Not because specificity makes the tool better, but because it gives you a real standard for whether the tool is working. If you know what the output should look like, you can evaluate the tool's output against it. If you know how long the task should take, you can measure whether the tool is actually saving time.

Without that anchor, the demo succeeds and your implementation fails, because the two were never solving the same problem.

The fix is available to anyone. It requires no technical skill. It requires thirty minutes and a willingness to name one real friction point before you open the next browser tab. That friction point becomes your evaluation filter. Every tool either passes it or it doesn't.

That is a much smaller, more manageable conversation than "how do I get better at AI?" And it produces real results instead of abandoned subscriptions.

#SmallBusiness #AIForBusiness #DigiBrix #QuietAI #EntrepreneurLife #BusinessOwner #ClarityFirst #WorkSmarter #SoloFounder